Why Are My Tests Failing?

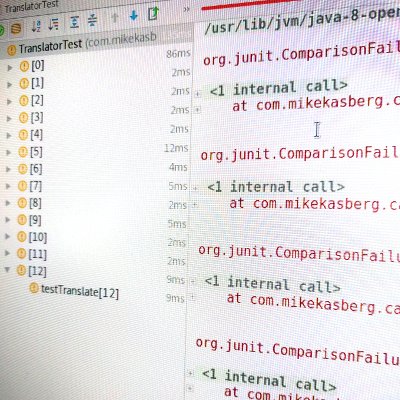

Have you ever tried to diagnose a test failure and had no idea what’s broken? Maybe you were looking at something like this:

Failed asserting that false is true.

Or, equally bad:

    java.lang.AssertionError
        at ...

These are pretty bad failure messages. They carry the bare minimum of information you can get from a failing test: they tell you something’s broken, and probably give you a line number or a stack trace, but nothing about why. In the spirit of Google’s Testing on the Toilet, this is my own rant about one way you can make your tests better.

Failure messages like those above might have been produced by code like this:

    @Test
    public void testMyCollection() {
        List<Integer> numbers = Arrays.asList(1, 3, 5, 7, 9);
        assertTrue(numbers.contains(2));
    }

The problem is that if assertTrue() fails, no additional information is provided to the user.

Let’s see if we can clean that up a little. A simple approach is to just add a failure message:

assertTrue("The list is missing the number 2.", numbers.contains(2));

That makes our test failure message a little more useful:

java.lang.AssertionError: The list is missing the number 2.

But that’s tedious. Nobody wants to write a message for every assertion. Also, there will be a tendency for the message to become outdated as the code is updated. Can we do better?

Matchers

Hamcrest is a framework that provides matchers for various data types with nice failure messages. You call assertThat(actual, matcher) and your object is compared to the Matcher. If the condition isn’t fulfilled, you get a useful, easy-to-read failure message.

    @Test
    public void testMyCollection() {
        final List<Integer> numbers = Arrays.asList(1, 3, 5, 7, 9);
        assertThat(numbers, hasItem(2));
    }

    java.lang.AssertionError:
    Expected: a collection containing <2>
         but: was <1>, was <3>, was <5>, was <7>, was <9>

Matchers are great because they know how to produce a useful message when a condition isn’t fulfilled. The best part about Hamcrest is that matchers are reusable, and it comes with a lot of them. If it doesn’t have a Matcher you need, it’s easy to write your own! And Hamcrest isn’t the only framework that can do this. Other frameworks, like AssertJ, work in a very similar way.
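To make the idea concrete, here is a minimal, dependency-free sketch of what a matcher does under the hood. It is not Hamcrest’s API: SimpleMatcher, MatcherSketch, containsItem, and check are hypothetical names invented for this illustration, loosely modeled on the assertThat(actual, matcher) shape described above. The point is only that the matcher, not the test author, owns the failure message.

```java
import java.util.Collection;
import java.util.stream.Collectors;

// Hypothetical stand-in for the matcher idea. NOT Hamcrest's API;
// every name in this sketch is illustrative only.
interface SimpleMatcher<T> {
    boolean matches(T actual);        // does the value satisfy the condition?
    String describeExpected();        // what we wanted to see
    String describeActual(T actual);  // what we saw instead
}

final class MatcherSketch {
    private MatcherSketch() {}

    // A reusable matcher: the collection must contain the given item.
    static <E> SimpleMatcher<Collection<E>> containsItem(E item) {
        return new SimpleMatcher<Collection<E>>() {
            @Override
            public boolean matches(Collection<E> actual) {
                return actual.contains(item);
            }

            @Override
            public String describeExpected() {
                return "a collection containing <" + item + ">";
            }

            @Override
            public String describeActual(Collection<E> actual) {
                // List every element so the reader can see what was there.
                return actual.stream()
                        .map(e -> "was <" + e + ">")
                        .collect(Collectors.joining(", "));
            }
        };
    }

    // Builds the failure message from the matcher, so callers never
    // have to write (or maintain) a message by hand.
    static <T> void check(T actual, SimpleMatcher<? super T> matcher) {
        if (!matcher.matches(actual)) {
            throw new AssertionError("\nExpected: " + matcher.describeExpected()
                    + "\n     but: " + matcher.describeActual(actual));
        }
    }
}
```

Calling MatcherSketch.check(numbers, MatcherSketch.containsItem(2)) on the list from the earlier test throws an AssertionError whose message names both the expected item and every element that was actually present. In a real project you would use Hamcrest’s or AssertJ’s own types rather than a hand-rolled interface like this one.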

More Complexity

So far, we’ve looked at a few quick examples, but it’s not always so simple. Suppose you have some integration tests for an API that are running code like this:

assertEquals(200, response.getStatus());

If that test fails, you’ll get a message that looks something like this:

    java.lang.AssertionError:
    Expected :200
    Actual :422

OK, that’s a little useful… At least you know the API returned a 422 when it was supposed to return a 200. If you were trying to debug this, would you want any more information? Personally, I’d want to see the response body. It would save me a lot of time, since without it I’ll have to debug through this test or call the API manually to see what went wrong.

But how do you make the response body show up when the test fails? Hamcrest (at least as we’ve used it so far, without custom Matchers) won’t help you here, since you’re already getting a reasonable message for the integer comparison. What we need is to add more info to the message. That’s easy enough:

assertEquals(response.getBody(), 200, response.getStatus());

That’s better. Now, we can see the body of the HTTP response in our failing test:

    java.lang.AssertionError: ID is a required parameter, but no ID was provided.
    Expected :200
    Actual :422

This message gives us much more information about what is wrong with the system we’re testing. It saves us time when we’re trying to debug a problem, and that can be incredibly valuable when it helps your team iterate quicker. If you find yourself doing things like this often, it might make sense to look for a library to help with it, or write your own matcher that can automatically parse the response body (or status code, headers, etc.) out of an HTTPResponse.
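If you check status codes in many tests, one option is to wrap that pattern in a small helper so the body is always included. The sketch below assumes a response type with getStatus() and getBody(), as in the snippet above. FakeResponse and ResponseAssertions are hypothetical names used only for illustration, so substitute whatever your HTTP client actually returns.

```java
// Hypothetical response type for illustration only; a real HTTP client
// will have its own class with similar accessors.
final class FakeResponse {
    private final int status;
    private final String body;

    FakeResponse(int status, String body) {
        this.status = status;
        this.body = body;
    }

    int getStatus() { return status; }
    String getBody() { return body; }
}

final class ResponseAssertions {
    private ResponseAssertions() {}

    // Fails with both status codes AND the response body, so the reason
    // for the unexpected status is visible in the test report.
    static void assertStatus(int expected, FakeResponse response) {
        if (response.getStatus() != expected) {
            throw new AssertionError("Expected status " + expected
                    + " but was " + response.getStatus()
                    + "\nResponse body: " + response.getBody());
        }
    }
}
```

A test then calls ResponseAssertions.assertStatus(200, response) and nobody has to remember to pass the body as the message.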

What we’ve talked about so far is only the tip of the iceberg. What kind of failure message is most useful when comparing large blobs of JSON? CSVs? Selenium pages? As you include more matchers in your codebase, patterns will begin to emerge. You’ll learn what information is most relevant for particular types of failures, and you’ll make that information quickly available. Over time, these patterns can help you transform your test suite into a really powerful test harness that matches perfectly with the unit you’re testing.
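As one possible answer for the large-blob case, a failure message can list only the fields that differ instead of dumping both documents. The sketch below assumes the JSON has already been parsed into flat maps with non-null keys. MapDiff, describeDifferences, and assertSameEntries are hypothetical names, and nested objects are deliberately left out.

```java
import java.util.ArrayList;
import java.util.List;
import java.util.Map;
import java.util.Objects;
import java.util.TreeSet;

// Hypothetical helper for illustration: reports only the entries that differ.
final class MapDiff {
    private MapDiff() {}

    // Returns one line per differing key, in sorted key order.
    static List<String> describeDifferences(Map<String, ?> expected, Map<String, ?> actual) {
        TreeSet<String> keys = new TreeSet<>(expected.keySet());
        keys.addAll(actual.keySet());

        List<String> lines = new ArrayList<>();
        for (String key : keys) {
            Object want = expected.get(key);
            Object got = actual.get(key);
            if (!expected.containsKey(key)) {
                lines.add(key + ": unexpected value <" + got + ">");
            } else if (!actual.containsKey(key)) {
                lines.add(key + ": missing, expected <" + want + ">");
            } else if (!Objects.equals(want, got)) {
                lines.add(key + ": expected <" + want + "> but was <" + got + ">");
            }
        }
        return lines;
    }

    // Throws with a short summary of the differences, or returns quietly.
    static void assertSameEntries(Map<String, ?> expected, Map<String, ?> actual) {
        List<String> lines = describeDifferences(expected, actual);
        if (!lines.isEmpty()) {
            throw new AssertionError(lines.size() + " field(s) differ:\n"
                    + String.join("\n", lines));
        }
    }
}
```

With hundreds of fields and one wrong value, the failure is a single line naming that field rather than two walls of text to compare by eye.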

So What?

So what’s the point?

Tl;dr: Make sure your unit and integration tests print useful information when they fail. It will save you time in the long run.

That sounds like it’s really simple, but it’s easy to forget about it when you just want to write a quick test and move on to the next piece of code. TDD can help with this - if you watch a test fail before you watch it pass, you have a great opportunity to improve the failure message if it needs more info.

We can break all of this down into a few simple points:

- Always include a useful failure message with your tests.

- Always watch your tests fail (not just pass!) at least once, so you can see the message that a failure produces and determine if it is useful.

- It’s fine to use normal Assert methods, like Assert.assertEquals(expected, actual), if you like them and they produce useful messages for you.

- Use Hamcrest matchers if there’s no good assertion method. If you can’t find a Hamcrest matcher that you need, write your own (by subclassing BaseMatcher or one of its children).

- If it’s too complicated to write a matcher or you don’t plan on reusing it, you can always fall back to assertTrue(message, condition) with a useful message.

About the Author

👋 Hi, I'm Mike! I'm a husband, I'm a father, and I'm a staff software engineer at Strava. I use Ubuntu Linux daily at work and at home. And I enjoy writing about Linux, open source, programming, 3D printing, tech, and other random topics. I'd love to have you follow me on X or LinkedIn to show your support and see when I write new content!

